Part 1 covered UTM tracking. Part 2 covered GA4 admin settings and key-event hygiene. If those are wrong, nothing downstream is trustworthy.

This post is Part 3: the data quality layer. You can have clean UTMs and sensible admin settings and still feed Google Ads optimization models garbage. Tags break. Triggers misfire. Events duplicate. Referral spam and bot traffic creep in. Old conversion definitions linger in the UI while nothing fires in production.

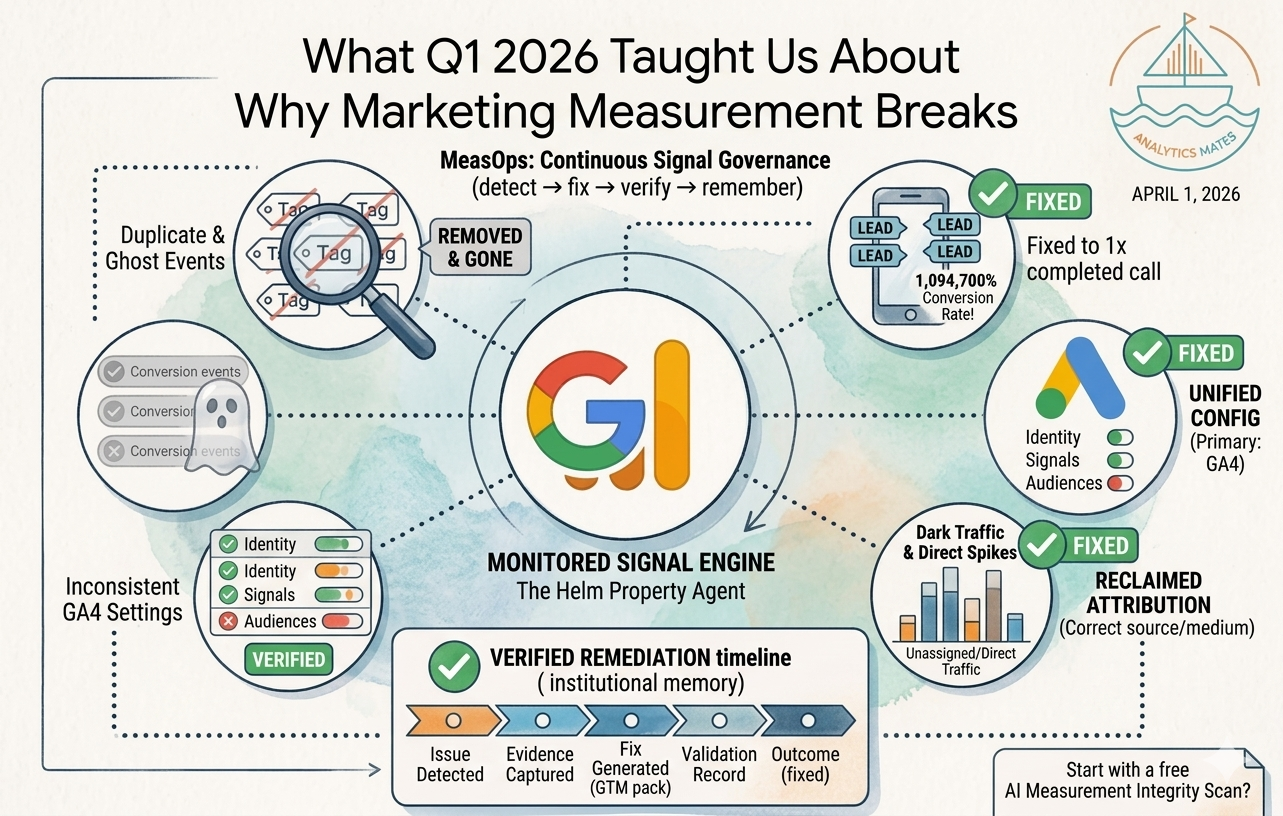

That decay over time is what we call marketing signal drift. A one-time audit fixes a snapshot. Signal governance is the practice of catching drift before it shows up on a P&L.

Why signal drift beats “we ran an audit last quarter”

Smart Bidding does not care that your documentation is pretty. It consumes the conversion events and revenue signals you send. When those signals are duplicated, stale, or polluted, the algorithm learns the wrong economics.

Static audits are useful. They are not sufficient. Measurement operations means revisiting the same questions on a cadence that matches how often your site, tags, and campaigns change.

Three data quality checks that belong in every GA4 review

Use the same 90-day window we use in Parts 1 and 2. Short windows hide structural problems.

1. Duplicate and implausible events (over-reporting)

The most dangerous failure mode for paid media is not under-counting leads. It is over-counting conversions that never happened at human scale.

We recently completed a paid media measurement integrity review for a national home services brand. In GA4, the dial800_call key event showed a 1,094,700% conversion rate per user: 10,947 conversions attributed to one user in 90 days. That pattern indicates severe event duplication or misconfiguration, not a phone system that outperformed physics.

When every “conversion” fires multiple times per visit, or fires without the business outcome you think you are measuring, Google Ads is not optimizing toward real customers. It is optimizing toward noise. tCPA and Max Conversions do not forgive that.

What to do: For each imported key event, compare event volume to users and to business reality. Flag any key event over 100% conversions-per-user. Trace the implementation (GTM triggers, vendor tags, data layer) until the count matches the definition of a conversion.
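The comparison above can be sketched in a few lines of Python. This is a minimal illustration, not a GA4 API client: it assumes you have already exported per-event conversion and user counts for the 90-day window. The `KeyEventStats` type, the function names, and the `form_submit` row are hypothetical. Only the `dial800_call` figures come from the review described above.

```python
from dataclasses import dataclass


@dataclass
class KeyEventStats:
    """One row of an exported key-event report (hypothetical shape)."""
    name: str
    conversions: int  # key-event count in the 90-day window
    users: int        # users who triggered the event in the same window


def conversions_per_user_pct(stats: KeyEventStats) -> float:
    """Return conversions per user as a percentage."""
    if stats.users == 0:
        # Conversions with no users is itself a red flag; treat it as infinite.
        return float("inf") if stats.conversions else 0.0
    return 100.0 * stats.conversions / stats.users


def flag_over_reporting(rows, threshold_pct=100.0):
    """List (event name, rate) pairs whose rate exceeds the threshold."""
    flagged = []
    for row in rows:
        rate = conversions_per_user_pct(row)
        if rate > threshold_pct:
            flagged.append((row.name, rate))
    return flagged


rows = [
    KeyEventStats("dial800_call", conversions=10_947, users=1),  # from the review
    KeyEventStats("form_submit", conversions=120, users=400),    # hypothetical
]

flagged = flag_over_reporting(rows)
for name, rate in flagged:
    print(f"{name}: {rate:,.0f}% conversions per user")
```

A flag is a prompt to trace the implementation, not a verdict. Some events legitimately fire more than once per user, which is why the checklist allows a documented reason.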

2. Ghost conversions (configured but inactive)

GA4 properties often accumulate key events and Google Ads conversion actions long after the site or the funnel changed.

In the same review, 18 key events were configured in GA4, but only 5 had meaningful volume in the last 90 days. The rest were effectively ghosts: still declared, not representing live behavior.

Platforms and humans both suffer. Analysts report on a menu of events that do not reflect current journeys. Ad platforms receive mixed signals about what “counts” as success.

What to do: Export your key-event list. Filter to the last 90 days of conversions per event. Archive or demote anything that is not firing. Align Google Ads primary conversions to that shortened, honest list.
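As a rough sketch of that filter, assume you have the configured key-event names in a list and a mapping of event name to 90-day conversion count. All event names and counts below are made up for illustration, and `min_conversions` is an assumed cutoff for "meaningful volume" that you would set per property.

```python
def split_ghost_events(configured, conversions_90d, min_conversions=1):
    """Split configured key events into active ones and ghosts.

    configured: key-event names declared in the property.
    conversions_90d: mapping of event name to conversions in the last 90 days.
    min_conversions: assumed threshold for "meaningful volume".
    """
    active, ghosts = [], []
    for name in configured:
        # An event missing from the export counts as zero conversions.
        if conversions_90d.get(name, 0) >= min_conversions:
            active.append(name)
        else:
            ghosts.append(name)
    return active, ghosts


# Hypothetical export; names and counts are illustrative only.
configured = ["purchase", "generate_lead", "legacy_quote_form", "old_chat_start"]
conversions_90d = {"purchase": 412, "generate_lead": 97, "legacy_quote_form": 0}

active, ghosts = split_ghost_events(configured, conversions_90d)
print("Keep as candidates for primary conversions:", active)
print("Archive or demote:", ghosts)
```

Raising `min_conversions` above 1 also catches events that technically fire but too rarely to inform bidding.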

3. Bot traffic, spam referrals, and session logic that distort rates

Spam referrals and unchecked bot traffic inflate sessions and deflate conversion rates. You are not “bad at creative.” Your denominator is lying.

Pair that with session and attribution checks: self-referrals, (not set) landing pages, and direct traffic spikes that are really mis-tagged or mis-attributed paid sessions. Those issues belong in the same pass as duplicate events, because they are all ways the story GA4 tells stops matching the money.

What to do: Review referral exclusions and unwanted traffic. Compare Explorations or standard reports for session source/medium, landing page, and event counts per session when something looks off. Fix tagging before you redecorate campaigns.
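To make the "lying denominator" concrete, here is a small sketch that recomputes a session conversion rate with suspected spam referrals removed and lists self-referrals. It assumes rows exported by session source/medium. The domains, the spam list, and every number are hypothetical.

```python
def conversion_rate(rows):
    """Conversions divided by sessions across the given rows."""
    sessions = sum(r["sessions"] for r in rows)
    conversions = sum(r["conversions"] for r in rows)
    return conversions / sessions if sessions else 0.0


def is_self_referral(row, own_domains):
    """True when a referral's source is one of your own domains or subdomains."""
    if row["medium"] != "referral":
        return False
    source = row["source"].lower()
    return any(source == d or source.endswith("." + d) for d in own_domains)


OWN_DOMAINS = {"example.com"}          # hypothetical: your own site
SPAM_SOURCES = {"spam-site.invalid"}   # hypothetical: sources you judged to be spam

rows = [
    {"source": "google", "medium": "cpc", "sessions": 1000, "conversions": 50},
    {"source": "spam-site.invalid", "medium": "referral", "sessions": 1000, "conversions": 0},
    {"source": "shop.example.com", "medium": "referral", "sessions": 200, "conversions": 10},
]

clean_rows = [r for r in rows if r["source"] not in SPAM_SOURCES]
raw_rate = conversion_rate(rows)          # 60 conversions / 2,200 sessions
clean_rate = conversion_rate(clean_rows)  # 60 conversions / 1,200 sessions
self_referrals = [r["source"] for r in rows if is_self_referral(r, OWN_DOMAINS)]

print(f"Raw rate: {raw_rate:.2%}, rate without suspected spam: {clean_rate:.2%}")
print("Self-referrals to investigate:", self_referrals)
```

In this made-up data the spam sessions nearly halve the reported rate without any change in real customer behavior.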

Signals beyond GA4: AI visibility

Measurement integrity is not only about what happens inside GA4. Discovery is shifting toward AI-generated answers; brands need to know whether they show up in those surfaces, not only whether a click converted.

We ship an AI Visibility experience in The Helm to help teams reason about answer-engine and LLM-driven discovery alongside analytics work. You can open it here: https://app.analyticsmates.com/ai-visibility.

It does not replace fixing duplicate call events. It extends the idea that governance applies to every channel where your brand is supposed to earn attention.

Where we are taking the product

This series mirrors how we built Paid Media Measurement Integrity reviews in The Helm: workstreams for GA4 configuration, conversion signal trust, data quality and optimization risk, and attribution governance. The point was never a PDF trophy. It was a repeatable operating model.

We are evolving The Helm from a static audit artifact into measurement operations: a property-level agent connected to GA4 that watches for signal drift (broken conversions, missing events, and anomalies) and records it on a property timeline so you fix issues before spend compounds the mistake.

Behind the scenes, we are also wiring internal ops context (client history, property facts, meeting prep) so recommendations stay grounded in how each business actually runs. The blog stays focused on what you can audit this week; the product is where we automate the recurrence.

Pre-spend checklist (data quality add-on)

Add these to the Part 2 checklist:

Data quality

- No key event shows over 100% conversions per user in 90 days without a documented reason

- Configured key events match events that actually fire in the last 90 days; ghosts removed or demoted

- Referral spam / bot traffic reviewed; direct and self-referral anomalies investigated, not ignored

Related posts:

- How to Audit Your Paid Media Measurement in 2026: Start with UTM Tracking

- 6 GA4 Settings Every Paid Media Manager Should Audit Before Scaling Spend

These checks are part of how we run paid media measurement integrity work in The Helm.
