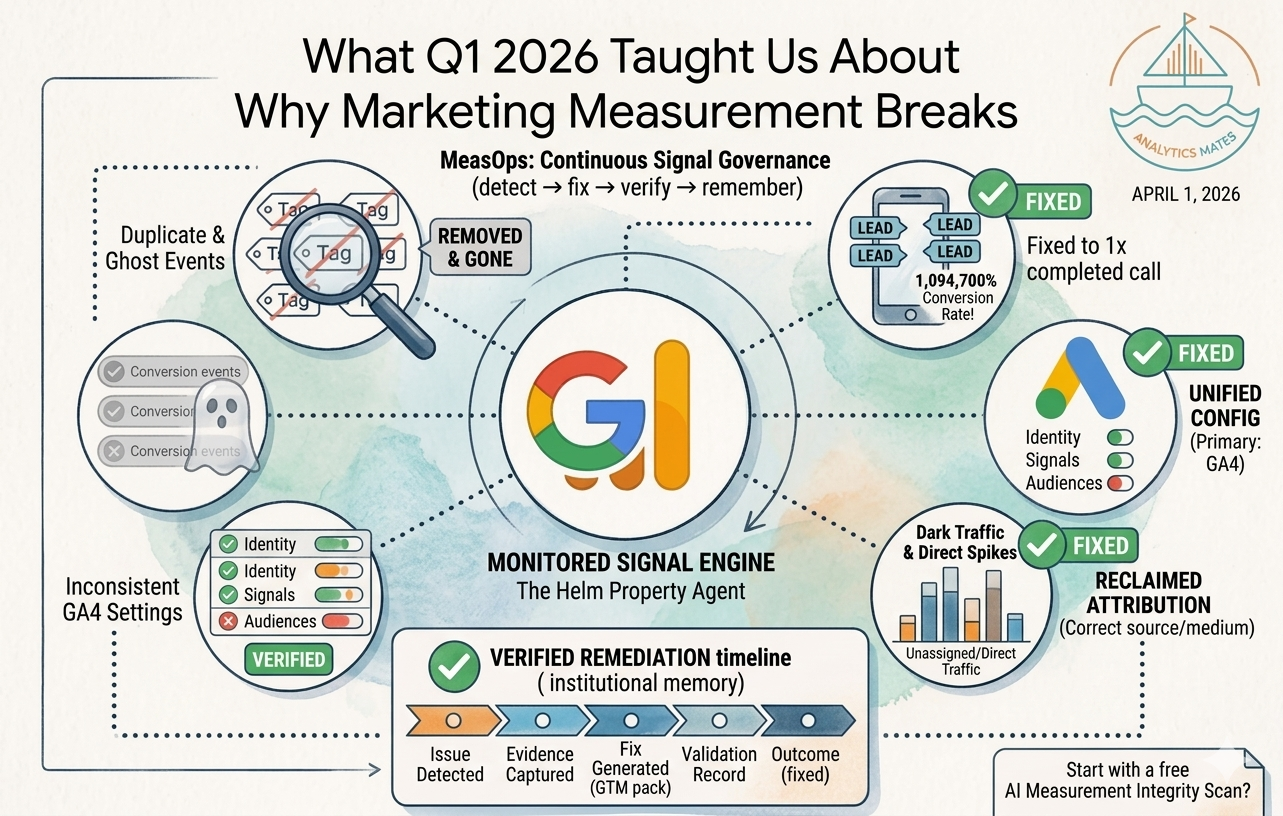

1,094,700%.

That’s the conversion rate per user we found on a single key event during a Q1 audit. One user. Ninety days. 10,947 conversions attributed to that one person.

That number came from a real property. It wasn’t a bot. It was a call-tracking tag configured to fire on every page load instead of on completed calls. Every page view, every reload, every back-button navigation triggered another “conversion.” The call never had to happen.

Google Ads was running Maximize Conversions bidding on that property. The bidding algorithm trained on that signal. Budget allocation, bid adjustments, and optimization decisions were all based on an event that had no relationship to an actual customer picking up a phone.

This wasn’t a reporting issue. It directly influenced spend.

In an AI-driven ad environment, measurement doesn’t describe performance. It controls it. The signal you send becomes the input the system uses to decide what to do next. If the signal is wrong, the system doesn’t pause or question it. It scales the mistake.

That example was extreme, but it wasn’t unique. We saw the same pattern across every audit in Q1. The specifics changed. The underlying issue didn’t.

What we set out to build

At the start of the year, the goal was straightforward. Build a better GA4 audit.

Not a checklist. Not a static report. Something that shows what’s broken, explains why it matters, and gives a clear path to fix it in the same session.

That’s what The Helm was in January. A faster, more usable audit workflow.

Then we ran it across real properties.

What the audits actually showed

We reviewed measurement setups across multiple industries: insurance, healthcare, home services, automotive. Different teams, different stacks, different levels of sophistication.

The same failure showed up everywhere.

A home services company had a conversion tag firing on page load after a site update changed the DOM structure. The original setup was correct. It stayed in place after the site changed. The trigger conditions no longer matched reality. The issue persisted for months.

An insurance property had eighteen configured key events. Five were active. The rest remained in GA4 but no longer fired. Reporting referenced events tied to an outdated funnel.

A healthcare system tracked referral, consult, and outcome as separate steps. Only the first event remained active after a migration. The rest dropped off without being replaced.

An automotive brand had consistent GA4 data but outdated conversion imports in Google Ads. The analytics layer and the ad platform were no longer aligned.

None of these were initial setup failures. They were correct at one point in time.

The systems changed. The measurement did not.

We started referring to this pattern as signal drift.

Signal drift describes what happens when tracking degrades over time without a clear breaking point. Tags continue to fire, but not under the right conditions. Events remain configured, but no longer reflect actual behavior. Attribution still exists, but points to outdated paths.

The failure is not in configuration. It’s in maintenance.
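
The “configured but no longer firing” case from the insurance example is one of the easier forms of drift to check for. A minimal sketch, assuming you can export the list of configured key events and a per-event hit count for a lookback window. The function name, event names, and counts below are hypothetical and for illustration only, not data from the audits:

```python
from __future__ import annotations

from typing import Mapping, Sequence


def find_stale_key_events(
    configured: Sequence[str],
    event_counts: Mapping[str, int],
) -> list[str]:
    """Return configured key events with no recorded hits in the lookback window."""
    # An event missing from the export is treated the same as a zero count.
    return [name for name in configured if event_counts.get(name, 0) == 0]


# Hypothetical inputs: four configured key events, three of which still fire.
configured = ["quote_start", "quote_submit", "call_click", "legacy_form_submit"]
last_30_days = {"quote_start": 412, "quote_submit": 97, "call_click": 58}

stale = find_stale_key_events(configured, last_30_days)
print(stale)
```

A zero count is not proof that an event is broken. Some events are seasonal or rare. It is a prompt to check whether the event still maps to a step in the current funnel.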

Where this breaks across platforms

GA4 is only one layer in the system.

The same signal feeds into multiple downstream systems: Google Ads through conversion imports, Meta through the Conversions API (CAPI), and CRM platforms through offline attribution.

When the signal drifts, it doesn’t break in one place. It diverges.

GA4 reports one number. Google Ads reports another. Meta reports a third. Each platform processes the same flawed input through its own model. The discrepancies compound over time.

The platforms making optimization decisions do not reconcile these differences. They continue to operate based on the data they receive.
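
Since the platforms won’t reconcile the numbers themselves, a parity check has to happen outside them. A rough sketch, assuming you already have conversion counts for the same event and date range from each platform. The platform keys, the counts, and the 10% tolerance are all assumptions. Some gap is expected because each platform uses its own attribution model, so the right tolerance depends on the property:

```python
from __future__ import annotations


def parity_gaps(
    counts: dict[str, int],
    reference: str = "ga4",
    tolerance: float = 0.10,
) -> dict[str, float]:
    """Return platforms whose count differs from the reference by more than `tolerance`.

    Values are relative differences: 0.3 means 30% above the reference count.
    """
    base = counts[reference]
    if base == 0:
        raise ValueError("reference count is zero; relative parity is undefined")

    gaps: dict[str, float] = {}
    for platform, count in counts.items():
        if platform == reference:
            continue
        relative_diff = (count - base) / base
        if abs(relative_diff) > tolerance:
            gaps[platform] = round(relative_diff, 3)
    return gaps


# Hypothetical counts for one conversion event over the same date range.
counts = {"ga4": 200, "google_ads": 260, "meta": 190}
print(parity_gaps(counts))
```

With these made-up numbers, Google Ads is flagged at 30% above GA4 and Meta, at 5% below, stays inside the tolerance. The useful signal is usually the trend: a gap that widens week over week suggests drift rather than a stable modeling difference.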

Why audits are the wrong unit of work

Most teams approach measurement as a periodic task. Run an audit, fix what’s found, and move on.

That approach assumes failure is visible and immediate.

Signal drift does not behave that way. It degrades gradually. Conversion rates shift slightly. The distribution of attributed conversions changes. Performance looks plausible enough to avoid triggering concern.

Meanwhile, bidding systems continue to optimize.

In a manual environment, a human might question unexpected changes. In an automated environment, the system adjusts spend based on whatever signal it receives. If the signal is inflated, duplicated, or misaligned, the optimization follows.

This creates a delayed cost. The issue does not appear as a failure. It appears as underperformance.

The structure of the problem does not match the structure of an audit.

What we built instead

The shift we made in Q1 was moving from audits to operations.

Instead of asking, “Is this setup correct?” the question becomes, “Is this system still behaving correctly right now?”

That requires a different model.

The approach we landed on is simple:

Detect → fix → verify → remember

Detection identifies when signals move outside expected patterns. That can be volume changes, abnormal conversion rates, or event behavior that no longer aligns with user actions.

Fixes are generated as specific implementations. That can be a GTM update, a configuration change, or a defined set of steps.

Verification checks whether the change produced the expected outcome using the same data source that surfaced the issue.

Memory records the issue, the fix, and the validation result, creating a history of how the property has changed over time.

This replaces a one-time audit with a continuous system.
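
To make the loop concrete, here is a minimal sketch of detect → fix → verify → remember for a single event. It is not how The Helm is implemented. The class, the function names, the one-conversion-per-user threshold, and the post-fix numbers are assumptions for illustration. Only the 10,947-conversions-from-one-user figure comes from the audit described above:

```python
from __future__ import annotations

from dataclasses import dataclass
from datetime import date


@dataclass
class DriftRecord:
    """One entry in the property's history: the issue, the fix, the validation result."""
    event: str
    detected_on: date
    issue: str
    fix: str
    verified: bool = False


def detect(event: str, conversions: int, users: int, max_per_user: float = 1.0) -> str | None:
    """Return a description of the issue, or None if the signal looks plausible."""
    if users == 0:
        return None
    per_user = conversions / users
    if per_user > max_per_user:
        return f"{event}: {per_user:,.0f} conversions per user exceeds {max_per_user}"
    return None


def run_cycle(
    event: str,
    before: tuple[int, int],
    after: tuple[int, int],
    fix: str,
    history: list[DriftRecord],
    today: date,
) -> DriftRecord | None:
    """Detect on `before`, verify on `after` with the same check, then remember."""
    issue = detect(event, *before)
    if issue is None:
        return None
    record = DriftRecord(event=event, detected_on=today, issue=issue, fix=fix)
    # Verification reuses the detector that surfaced the issue.
    record.verified = detect(event, *after) is None
    history.append(record)
    return record


history: list[DriftRecord] = []
record = run_cycle(
    event="call_conversion",
    before=(10_947, 1),   # (conversions, users) from the audit example
    after=(3, 40),        # hypothetical numbers after the trigger is corrected
    fix="Change GTM trigger from page load to completed call",
    history=history,
    today=date(2025, 4, 1),  # placeholder date
)
print(record)
```

A fixed per-user ceiling is the crudest possible detector, and some events legitimately fire more than once per user. In practice the threshold would be set per event, or replaced with a comparison against the event’s own baseline.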

Where Q2 goes from here

Three areas follow directly from what we saw in Q1.

Paid media signal monitoring extends validation into Google Ads and Meta. This includes checking conversion parity, validating imports, and identifying attribution drift at the platform level.

Portfolio-level visibility addresses the structural problem agencies face. Managing multiple properties requires a system that surfaces risk across accounts without requiring individual inspection.

The API layer allows this system to integrate into existing workflows. Alerts, reporting, and ticketing can connect directly to the measurement layer instead of relying on manual checks.

The question that matters

When did your conversion tracking last change?

And how would you know if it stopped working tomorrow?

Not when you would notice performance changes. Not when reporting looks inconsistent.

When would you detect the issue at the signal level?

If there isn’t a clear answer, the risk isn’t theoretical. It’s already present.

Next step

If you’re running paid media, the practical question is simple:

What are your bidding systems optimizing toward right now?

If the answer isn’t clear, that’s the starting point.

Run the audit:

https://app.analyticsmates.com
